Over the last few years, technology has advanced enough to realize ambitious, even crazy, ideas that earlier generations never thought possible. In particular, neural networks have helped deliver some of the most ambitious inventions ever, such as self-driving cars, smart assistants and health-care diagnosis, which have significantly improved the quality of human life.

Questionable technology

DeepNude

Adobe VoCo

VoCo is software that can alter voice recordings to include words or phrases the speaker never uttered. All this neural network, developed by Adobe Research, needs is a 20-minute recording of the speaker.

“We have already revolutionised photo editing. Now it’s time for us to do the audio stuff,” said Adobe’s Zeyu Jin after the appalling demo. A company of talented individuals failed to evaluate the ethical issues. Court testimony, media, journalism, hell, even voice calls… Nothing can ever be trusted again.

Boeing 737 MAX Crashes

The Boeing 737 MAX crashes, which took the lives of hundreds of people, were a direct consequence of conscious, poor design choices by the engineering team.

Boeing was under pressure to match its competitors’ latest engine. The new engine couldn’t be installed in the usual position on the existing airframe due to its larger size. Instead of redesigning the airframe, they mounted the engine higher up on the wing, which altered the aerodynamics of the aircraft. In some conditions this would pitch the nose upwards. They developed a software fix, MCAS, which would force the nose down whenever this was detected. The pilots weren’t trained on MCAS, as the aircraft needed to be advertised as an upgrade that required no re-training.

With a slew of poor engineering choices already in the bag, they decided to feed MCAS from only 1 sensor rather than the 2 available. Since these sensors are not very robust, redundant sensors are usually used to avoid false positives from erroneous data. But not Boeing. The safety feature that compared both sensors to flag errors was offered as a “paid add-on”. In flight, MCAS wrongly sensed that the nose was pitched too high and forced down a perfectly stable aircraft. With no safety system in place and no knowledge of MCAS, the pilots could not stop the aircraft from crashing.
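To make the redundancy argument concrete, here is a minimal sketch of what cross-checking two sensors buys you. It is not Boeing's actual logic: the function names, the `Decision` type and both thresholds are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

# Hypothetical values for illustration only; not taken from any real aircraft.
MAX_DISAGREEMENT_DEG = 5.0  # readings further apart than this count as a sensor fault
HIGH_ANGLE_DEG = 15.0       # nose-up angle above which the automation would step in


@dataclass
class Decision:
    intervene: bool     # should the automation push the nose down?
    sensor_fault: bool  # should the crew be alerted to a sensor disagreement?


def single_sensor_decision(reading_deg: float) -> Decision:
    """Trust one sensor blindly: a single bad reading triggers an intervention."""
    return Decision(intervene=reading_deg > HIGH_ANGLE_DEG, sensor_fault=False)


def cross_checked_decision(left_deg: float, right_deg: float) -> Decision:
    """Compare two sensors; if they disagree, do nothing and raise an alert."""
    if abs(left_deg - right_deg) > MAX_DISAGREEMENT_DEG:
        return Decision(intervene=False, sensor_fault=True)
    average = (left_deg + right_deg) / 2
    return Decision(intervene=average > HIGH_ANGLE_DEG, sensor_fault=False)
```

With a faulty left sensor reading 40 degrees while the right one reads 2, `single_sensor_decision(40.0)` asks for an intervention on a stable aircraft, whereas `cross_checked_decision(40.0, 2.0)` holds back and flags the disagreement instead.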

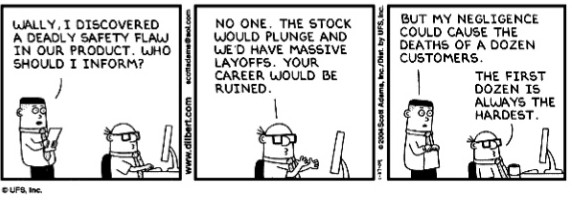

Would you or your family use what you built?

Need for Policy & Regulation

I keep coming back to the same questions. If my rifle claimed people’s lives, can it be that I…, an Orthodox believer, am to blame for their deaths, even if they are my enemies?

– Mikhail Kalashnikov, inventor of the AK-47